Part III – The Infrastructure Arms Race: Giga-Scale Economics

By Bhanu Nallagonda, Cofounder, Ogha Technologies

March ’26

If the economics of AI are abstract, the physical manifestation of the industry in 2025 is concretely, overwhelmingly massive. The race to AGI has morphed into a race for gigawatts. The era of the megawatt data centre ended in 2024, and 2025 ushered in the era of the Gigawatt Campus.

The Giga-Projects: Redrawing the Map

Tech giants are no longer building data centres; they are building cities of compute.

Project Stargate (Microsoft/OpenAI)

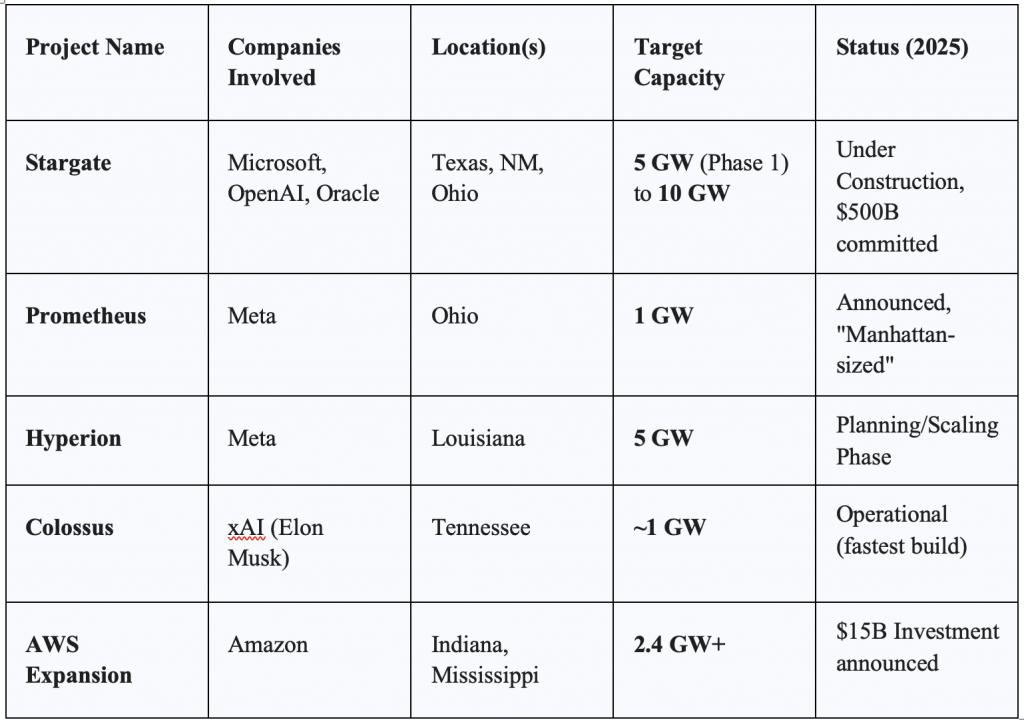

The most ambitious of these projects is “Stargate,” a $500 billion infrastructure initiative. By late 2025, OpenAI and Microsoft, in partnership with Oracle and SoftBank, were developing five new sites across the US (Texas, New Mexico, Ohio, and the Midwest).

- Scale: The project targets 10 gigawatts of capacity. For context, a typical nuclear reactor produces about 1 gigawatt (granted, Small Modular Reactors, or SMRs, are on the way too, at a fraction of that output). Stargate is essentially building energy infrastructure equivalent to ten nuclear reactors solely for AI.

- Strategic Importance: This project is designed to house millions of next-generation GPUs, including the forthcoming Nvidia Rubin architecture. It represents a bet that compute power is the ultimate commodity of the 21st century.

Subsequently, the project underwent changes: OpenAI reportedly scrapped its plans to build and own dedicated data centres. Its flagship site in Texas is partially operational, and the others are at various stages.
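The 10 GW scale comparison for Stargate is simple arithmetic, and it helps to see it in annual energy terms as well. The sketch below is illustrative only: it assumes the full 10 GW runs continuously at full load all year and that a "typical" reactor delivers exactly 1 GW, so the energy figure is an upper bound. The helper names `annual_energy_twh` and `reactor_equivalents` are hypothetical.

```python
# Back-of-the-envelope scale check for a 10 GW campus.
# Assumptions (illustrative only): continuous full-load operation,
# and a "typical" reactor rated at exactly 1 GW.

HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def annual_energy_twh(capacity_gw: float, load_factor: float = 1.0) -> float:
    """Energy drawn in one year, in terawatt-hours (1 TWh = 1,000 GWh)."""
    return capacity_gw * HOURS_PER_YEAR * load_factor / 1_000


def reactor_equivalents(capacity_gw: float, reactor_gw: float = 1.0) -> float:
    """How many reactors of size reactor_gw would match the capacity."""
    return capacity_gw / reactor_gw


stargate_gw = 10.0
print(f"Reactor equivalents: {reactor_equivalents(stargate_gw):.0f}")
print(f"Annual energy at full load: {annual_energy_twh(stargate_gw):.1f} TWh")
```

Passing a `load_factor` below 1.0 (for example the 70% utilization used later in this article) scales the energy figure down proportionally.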

Meta’s Prometheus and Hyperion

Not to be outdone, Meta announced its “Prometheus” supercluster in Ohio (1 GW) and the “Hyperion” cluster in Louisiana, designed to scale to 5 GW! Mark Zuckerberg described these facilities as having the “footprint of Manhattan”, a physical testament to the company’s pivot to “Superintelligence Labs”. Unlike Microsoft’s cloud-focused approach, Meta’s infrastructure is largely dedicated to training its open-source Llama models and powering its consumer AI products. While OpenAI and Microsoft pivoted to renting, Meta is still doubling down on owning.

Amazon and Google

Amazon Web Services (AWS) committed to a 2.4 GW expansion in Indiana alone, part of a $15 billion investment plan for the region. Google, meanwhile, aggressively acquired power infrastructure, buying Intersect Power for $4.75 billion to secure clean energy for its AI loads.

The Energy Bottleneck and the Nuclear Pivot

The sheer energy density of these projects has collided with the realities of the power grid. In 2025, data centres were projected to consume a significant share of electricity in countries like Ireland and regions like Northern Virginia. This has forced a radical diversification of energy sourcing.

The Nuclear Renaissance: To bypass grid congestion, tech giants turned to nuclear power. Microsoft inked a historic deal to restart Three Mile Island Unit 1, effectively buying the plant’s entire output for 20 years. Simultaneously, Sam Altman-backed Oklo pushed forward with plans for Small Modular Reactors (SMRs), aiming for deployment by 2027–2028. Oklo received its initial regulatory approvals this month. The Department of Energy (DOE) has set a target for Oklo to achieve criticality (a self-sustaining nuclear reaction) at its pilot reactor by July 4, 2026. Oklo’s stock price has corrected sharply in the meantime.

Gas as a Bridge: With nuclear projects taking years to spool up, the immediate demand is being met by natural gas. “Behind-the-meter” gas plants (turbines installed directly at the data centre site) became the standard for rapid deployment. xAI pioneered this approach with its “Colossus” cluster, using rented gas turbines to bring 100,000 GPUs online in months rather than years.

The Infrastructure Giga Projects of 2025

Gigawatt Data Centre Economics

Here is a quick back-of-the-envelope calculation for a 1 GW AI data centre:

Building a 1 GW facility requires approximately $50B of upfront capital, with estimates ranging from $35B to $60B. With Nvidia’s latest Vera Rubin clusters, it can go as high as $65B!

The capex splits roughly as follows:

- Compute (GPUs): the lion’s share, 45% or more (48% for Vera Rubin).

- Construction and cooling: about 25% with specialized liquid cooling, less with air cooling.

- Electrical and other infrastructure: about 20–23%.

- High-bandwidth networking: about 7–10%, toward the higher end for clusters of a million or more GPUs.
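The capex split can be turned into dollar figures. This is a minimal sketch, assuming the $50B central estimate and taking the midpoint of each quoted range (45% compute, 25% construction and cooling, 21.5% electrical, 8.5% networking); those midpoints happen to sum to exactly 100%. The category labels, the midpoint choice and the `capex_breakdown` helper are illustrative assumptions, not a published cost model.

```python
# Split a gigawatt campus's capex into the categories quoted in the text.
# Assumptions: $50B total, 1 GW of capacity, and the midpoint of each
# quoted range; these are illustrative, not a published cost model.

CAPEX_USD = 50e9
CAPACITY_W = 1e9  # 1 GW

# Share of total capex per category (midpoints of the quoted ranges).
CAPEX_SHARES = {
    "compute (GPUs)": 0.45,
    "construction and cooling": 0.25,
    "electrical infrastructure": 0.215,  # midpoint of 20-23%
    "networking": 0.085,                 # midpoint of 7-10%
}


def capex_breakdown(total_usd: float, shares: dict[str, float]) -> dict[str, float]:
    """Return dollar amounts per category; shares must sum to 1."""
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("capex shares must sum to 100%")
    return {name: total_usd * share for name, share in shares.items()}


for name, usd in capex_breakdown(CAPEX_USD, CAPEX_SHARES).items():
    print(f"{name:<28} ${usd / 1e9:6.2f}B")

print(f"Capex per watt: ${CAPEX_USD / CAPACITY_W:.0f}/W")
```

Swapping in 0.48 for compute (the Vera Rubin figure) means trimming another category so the shares still add up to 100%, which is why the function rejects shares that don't.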

Monthly opex could approach $1 billion, or about $12 billion per year, including depreciation on a 5-year replacement cycle. Incidentally, power transmission fees have risen globally in 2026. Obsolescence rates are high enough to cause nervousness, but there are also cases where older hardware can plod on. Obsolescence accelerates with every leap in performance: for example, the performance-per-watt of a 2026 Vera Rubin chip is nearly 50x that of a 2023 Hopper chip, so facilities “plodding on” with older gear become too expensive to run relative to the tokens they produce!
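To see why obsolescence weighs so heavily, the sketch below splits the roughly $12B annual opex into straight-line depreciation and everything else, then estimates the energy bill and the energy cost per token on new versus older hardware. It takes the $50B capex, 5-year cycle, 70% utilization, 50x performance-per-watt ratio and 1.6 quadrillion tokens per year from this article; the $0.07/kWh all-in electricity price is a hypothetical assumption, and the 50x ratio is applied naively as 50x more energy per token on Hopper-class gear.

```python
# Decompose annual opex and compare energy cost per token across hardware
# generations. Figures from the text: $50B capex, 5-year straight-line
# depreciation, $12B/yr total opex, 70% utilization, 1.6e15 tokens/yr,
# 50x perf-per-watt. The electricity price is a hypothetical assumption.

CAPEX_USD = 50e9
USEFUL_LIFE_YEARS = 5
ANNUAL_OPEX_USD = 12e9          # ~$1B/month, depreciation included
POWER_KW = 1e6                  # 1 GW
UTILIZATION = 0.70
PRICE_PER_KWH = 0.07            # hypothetical all-in electricity price
TOKENS_PER_YEAR = 1.6e15
PERF_PER_WATT_RATIO = 50        # Vera Rubin vs Hopper, per the text

depreciation = CAPEX_USD / USEFUL_LIFE_YEARS        # $10B/yr
other_opex = ANNUAL_OPEX_USD - depreciation         # power, staff, upkeep...

energy_kwh = POWER_KW * 24 * 365 * UTILIZATION
energy_cost = energy_kwh * PRICE_PER_KWH

# Energy cost per million tokens on the new fleet, and on older gear that
# needs PERF_PER_WATT_RATIO times more energy to produce the same tokens.
new_energy_per_m = energy_cost / (TOKENS_PER_YEAR / 1e6)
old_energy_per_m = new_energy_per_m * PERF_PER_WATT_RATIO

print(f"Depreciation:  ${depreciation / 1e9:.1f}B/yr")
print(f"Other opex:    ${other_opex / 1e9:.1f}B/yr")
print(f"Energy bill:   ${energy_cost / 1e9:.2f}B/yr")
print(f"Energy per 1M tokens, new gear: ${new_energy_per_m:.2f}")
print(f"Energy per 1M tokens, old gear: ${old_energy_per_m:.2f}")
```

Under these assumptions depreciation is about five times all other opex combined, and the energy alone needed to produce the same tokens on older gear (about $13 per million) exceeds the new fleet's all-in cost of roughly $7.50 per million ($12B over 1.6 billion million-token units). That is the "too expensive to run" effect, even before counting the extra hardware and floor space older gear would need.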

With an estimated throughput of 1.6 quadrillion tokens per year at 70% utilization, the production cost works out to about $7.50 per million tokens (1.6 quadrillion tokens is 1.6 billion units of a million tokens, and $12B ÷ 1.6 billion ≈ $7.50).
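The per-token figure follows from dividing annual cost by annual output. A minimal sketch using only the $12B and 1.6 quadrillion figures from the text; the implied tokens-per-second rate is an extra derived number for intuition and assumes the 70% utilization is spread evenly across the year. Both function names are hypothetical.

```python
# Production cost per million tokens for a 1 GW campus, from the text's
# estimates: ~$12B/yr all-in opex and 1.6 quadrillion tokens/yr at 70%
# utilization.

SECONDS_PER_YEAR = 365 * 24 * 3600


def cost_per_million_tokens(annual_cost_usd: float, tokens_per_year: float) -> float:
    """Annual cost divided by annual output in units of one million tokens."""
    return annual_cost_usd / (tokens_per_year / 1e6)


def implied_tokens_per_second(tokens_per_year: float, utilization: float) -> float:
    """Sustained rate while busy, assuming busy time = utilization * year."""
    return tokens_per_year / (SECONDS_PER_YEAR * utilization)


annual_cost = 12e9
tokens = 1.6e15

print(f"Cost per 1M tokens: ${cost_per_million_tokens(annual_cost, tokens):.2f}")
rate = implied_tokens_per_second(tokens, 0.70)
print(f"Implied rate while busy: {rate / 1e6:.1f}M tokens/s")
```

The $7.50 result is the per-million-token production cost discussed above; the roughly 72 million tokens per second it implies gives a sense of the serving load a single gigawatt campus would have to sustain.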

However, many variables come into the picture: input costs can be brought down through various optimizations, more efficient compute, better models, longer hardware life (older TPUs are still not being shut down because demand for compute is so high) and so on. At these costs, serving commodity consumer chat may not be profitable, but serving high-value reasoning models and enterprise use cases can be.
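Two of these levers, extending hardware life and squeezing more tokens out of the same hardware, can be explored with a small sensitivity sketch. It assumes, as an illustration, that the $12B opex consists of $10B of straight-line depreciation on $50B capex plus $2B of other costs that stay fixed, and it models "optimizations and better models" as a simple multiplier on token output. Both are simplifying assumptions, not measured data, and `cost_per_million` is a hypothetical helper.

```python
# Sensitivity of cost per million tokens to hardware life and throughput.
# Assumptions (illustrative): $50B capex depreciated straight-line,
# $2B/yr of other opex held fixed, 1.6e15 tokens/yr at baseline.

CAPEX_USD = 50e9
OTHER_OPEX_USD = 2e9
BASE_TOKENS_PER_YEAR = 1.6e15


def cost_per_million(life_years: float, throughput_multiplier: float = 1.0) -> float:
    """Cost per 1M tokens given depreciation life and an output multiplier."""
    annual_cost = CAPEX_USD / life_years + OTHER_OPEX_USD
    tokens = BASE_TOKENS_PER_YEAR * throughput_multiplier
    return annual_cost / (tokens / 1e6)


for life in (5, 6, 7):
    for mult in (1.0, 2.0):
        print(f"life={life}y, throughput x{mult:.0f}: "
              f"${cost_per_million(life, mult):.2f} per 1M tokens")
```

In this toy model, stretching hardware life from 5 to 7 years cuts the cost by roughly 24% (from $7.50 to about $5.71), while doubling output halves it. Extending life only pays off if the older gear stays competitive, which the performance-per-watt argument above calls into question.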

Specialized hardwired chips (ASICs) can bring this cost below a dollar. The companies that own the full stack (processors, models, data centres, software ecosystem, applications) are going to retain much of the profit across all the layers, with the lion’s share going to the heart of the stack: the chips.

In her Budget speech on February 1, 2026, Finance Minister Nirmala Sitharaman introduced India’s most aggressive digital infrastructure incentive yet: any foreign company that provides cloud services (SaaS, IaaS, PaaS) to global customers from data centres located in India will have that global revenue exempted from Indian income tax until 2047. To qualify, the foreign entity must serve its global clients from Indian soil, and the tax holiday is available only to those using “MeitY-notified” data centres. However, any revenue earned from Indian customers must be routed through a local Indian reseller entity, which remains subject to standard Indian corporate tax.

This move has fundamentally changed the math for hyperscalers (such as Amazon, Google, Microsoft and Meta) and colocation providers (Equinix, Digital Realty, Yotta etc.). Before the budget, about $70B worth of projects were in the pipeline; after it, about $90B were recorded. The tax holiday makes India more attractive than traditional hubs such as Singapore, which faces land and power constraints, or Dublin.

Crowding-out Effect on Funding

There has been a significant “Great Reallocation” of capital, and the definition of a “venture-scale” startup has shifted. The massive capex for giga-scale projects has created a polarized investment landscape, with AI startups capturing 35% of all global venture capital in the most recent funding cycle. Investors have largely stopped funding “AI wrappers” (simple apps built on top of LLMs). Instead, the money is flowing into infrastructure (power, chips, data centres) and vertical AI (highly specialized models for law, medicine or engineering). Heavy, high-interest borrowing by the biggies has also tightened the overall credit market, making it harder for small, non-AI startups to get affordable loans.

On the other hand, AI is dramatically accelerating scientific discovery. A few examples:

In mid-March 2026, a collaboration between Google DeepMind, NVIDIA and EMBL-EBI achieved a milestone that has been called “the completion of the biological periodic table”. While AlphaFold 2 predicted the shape of a protein, AlphaFold 3 (running on NVIDIA’s latest Blackwell clusters) predicts interactions: it can model how a protein binds to DNA, RNA and ligands (small molecules). For the first time, researchers can see the “lock and key” mechanism of viral entry into human cells in 3D before doing a single wet-lab experiment. This is the Human Interactome: a map of every conversation occurring between molecules in our bodies.

The massive investments in compute are also fundamentally changing the Probability of Technical Success (PTS) in medicine. Traditionally, finding a ‘lead compound’, i.e. a potential drug candidate, took 3–5 years. In early 2026, AI-native biotechs like Isomorphic Labs and Insilico Medicine have started to hit this milestone consistently in 13–18 months. Multiple AI-designed drugs for idiopathic pulmonary fibrosis (IPF) and solid tumours are in Phase III trials right now. If these succeed by late 2026, it will show that AI doesn’t just find more drugs, but better drugs that are less likely to fail in humans.

AI is now used to “twin” patients (digital twins!), creating digital models of how a specific person might react to a drug based on their genome. This is helping to optimize clinical trial enrolment and reduce the “90% failure rate” that has plagued pharma for decades.

The University of New Hampshire (UNH) breakthrough, published in Nature Communications in February 2026, represents a fundamental shift in how we “mine” human knowledge to solve physical engineering problems. The researchers built the Northeast Materials Database (NEMAD), a repository of 67,573 magnetic compounds, and from it identified 25 entirely new high-temperature magnets.

Traditional magnets lose their “pull” as they get hot. For an EV motor or wind turbine, a material must stay magnetic at temperatures of 150°C to 200°C or more. The 25 new compounds identified by the UNH AI were specifically selected because they maintain their magnetic properties at these extreme thresholds. Today, such applications rely on neodymium-based rare-earth magnets, and rare earths are infamously toxic to extract and carry significant geopolitical risk.

While the specific chemical formulas of the 25 new compounds are currently being withheld pending patents and further testing, early data suggests they use more abundant elements such as iron, cobalt and manganese in unique crystal geometries that mimic the strength of rare-earth magnets without the actual rare-earth atoms. Traditionally, discovering these 25 materials would have required 50 years of trial-and-error lab work. The AI did it in weeks by “reading” decades of unstructured scientific papers and predicting which combinations humans had missed.

While the AI discovery is a massive leap, the materials must now survive the “Valley of Death” between a database and a factory. Funding has specialized accordingly: generic software startups are struggling to find capital, while deep-tech startups that effectively combine AI with biology, materials, robotics or other real-world domains are receiving the largest Series A and B rounds in history.

Next: Part IV – Vibe Coding and the Paradox of Democratization