By Dhanyasri A, Virtual Intern (B.E. Comp. Sc., Final Year)

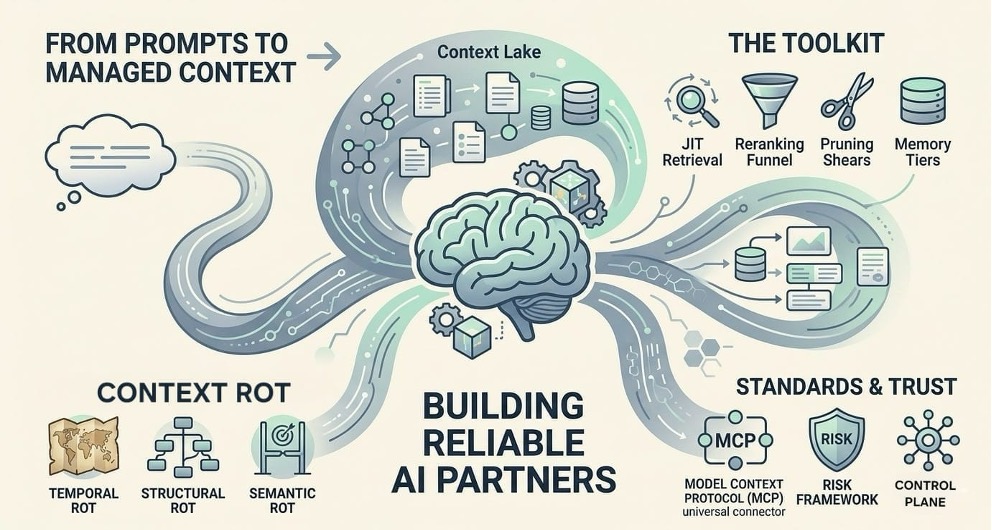

From Simple Prompts to Managed Context

The world of AI is moving away from plain “prompt engineering”, the art of picking the right words, toward a more rigorous discipline called Context Engineering. Think of prompt engineering as a bit of “word magic”: it worked for early models, but on its own it is not enough for large businesses. Context engineering, on the other hand, is the systematic practice of designing and organizing the information an AI model sees. It is about making sure the model actually knows the background and surrounding facts of your request, so that it gives you useful results. A helpful way to tell the two apart: prompt engineering supplies the “command”, while context engineering supplies an appropriate and precise “knowledge base” for the AI.

In professional AI development, Prompt Engineering has become a baseline skill, while Context Engineering is emerging as the high-value discipline. This shift is happening because we have realized that an AI’s performance on a task often depends less on how many billions of parameters it has and more on the quality of the “context” it receives at the moment it does the job. Many industry leaders now argue that supplying the right context is the most important skill for building AI tools people can actually trust.

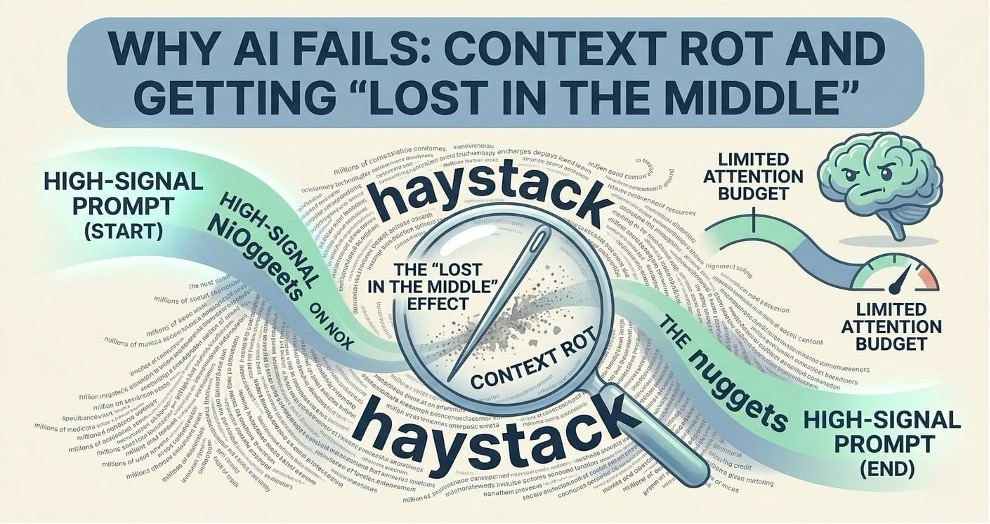

Why AI Fails: Context Rot and Getting “Lost in the Middle”

Even though modern AI models can now “read” more than a million tokens (hundreds of thousands of words) at once, a strange problem has emerged: the more information you give them, the worse they often perform. This is what we call Context Rot. It happens because of how these models are built. They have a limited “attention budget”: each attention head splits a fixed total amount of focus across every token in the context, so when you flood the model with data, each piece gets a thinner share.
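The “attention budget” idea can be made concrete with a toy calculation. Inside a transformer, attention turns raw relevance scores into weights with a softmax, so the weights for one query always sum to 1. The sketch below assumes a single query, one relevant token with a fixed score, and many equally scored distractors; the scores 2.0 and 0.0 and the helper `weight_on_relevant_token` are illustrative, not measured from any real model.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax: returns positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def weight_on_relevant_token(num_distractors: int,
                             relevant_score: float = 2.0,
                             distractor_score: float = 0.0) -> float:
    """Share of one query's attention that lands on the single relevant token
    when `num_distractors` less relevant tokens compete for the same budget.
    The default scores are toy values chosen for illustration."""
    scores = [relevant_score] + [distractor_score] * num_distractors
    return softmax(scores)[0]


for n in (10, 100, 1_000, 10_000):
    print(f"{n:>6} distractors -> weight on relevant token: "
          f"{weight_on_relevant_token(n):.4f}")
```

With these toy scores the relevant token’s share drops from about 0.42 with 10 distractors to below 0.001 with 10,000. Real models use many heads and layers and learn much sharper scores, so this is only the basic arithmetic behind the intuition, not a model of how any particular LLM behaves.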

The “Lost in the Middle” Problem

Research shows that if you put an important fact at the very beginning (primacy) or the very end (recency) of a long input, the AI usually finds it. But if that “needle” of information is buried in the middle of a massive “haystack” of text, the AI often misses it entirely. This is generally attributed to positional biases that models pick up during training, and the resulting U-shaped performance curve remains a persistent hurdle for engineers. While recent models have significantly improved their “Needle in a Haystack” scores, often hitting 99% on simple single-fact retrieval, they still struggle with Multi-Needle Reasoning, where the answer depends on finding and combining several facts scattered across the context.
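A “Needle in a Haystack” test is straightforward to set up. This minimal sketch only builds the test prompts; the filler text, the needle, and the helper names `build_haystack` and `make_test_prompts` are hypothetical, and the call to an actual model is left out because it depends on the provider.

```python
def build_haystack(filler: list[str], needle: str, depth: float) -> str:
    """Insert `needle` among filler sentences at a relative depth.

    depth=0.0 puts it first (primacy), depth=1.0 last (recency),
    and depth=0.5 in the middle, where retrieval tends to degrade.
    """
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    position = round(depth * len(filler))
    return " ".join(filler[:position] + [needle] + filler[position:])


def make_test_prompts(filler: list[str], needle: str, question: str,
                      depths: list[float]) -> dict[float, str]:
    """Build one prompt per depth; each would be sent to the model under test."""
    return {d: f"{build_haystack(filler, needle, d)}\n\nQuestion: {question}"
            for d in depths}


# Hypothetical test data: 1,000 filler sentences and one fact to find.
filler = [f"Background sentence number {i}." for i in range(1_000)]
needle = "The vault access code is 7421."
prompts = make_test_prompts(filler, needle, "What is the vault access code?",
                            [0.0, 0.25, 0.5, 0.75, 1.0])
```

Sending each prompt to the model, checking whether the answer contains “7421”, and plotting accuracy against depth is the usual way the U-shaped curve is drawn. A multi-needle variant inserts several related facts at different depths and asks a question that needs all of them.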

Types of Contextual Decay

In a business setting, this “rot” usually shows up in three ways:

- Temporal Rot: This is when the AI uses old, out-of-date info to make a decision today. It is like trying to navigate a city using a map from ten years ago.

- Structural Rot: This happens when the relationships between things change. For example, a company reshuffles its departments, but the AI still assumes the old hierarchy is in place.

- Semantic Rot: This is the most subtle version. The data itself has not changed, but what it means to the business has changed because the company’s goals have shifted.

The Cost of “Wheel-Spin”

Context rot isn’t just a technical glitch; it’s expensive. When an AI agent gets stuck on old or messy data, it enters a “wheel-spin” cycle, re-planning and retrying the same task over and over. This wastes money on compute and slows everything down without actually getting the job done.
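One simple mitigation is to cap how often an agent may retry the same plan. The sketch below assumes the agent’s next plan is available as a string; `WheelSpinGuard` and its limits are hypothetical, and hashing a normalized plan is just one basic way to detect repeats.

```python
import hashlib
from collections import Counter


class WheelSpinGuard:
    """Hypothetical guard that halts an agent loop stuck re-issuing one plan.

    `max_repeats` and `max_steps` are illustrative limits, not values taken
    from any particular agent framework.
    """

    def __init__(self, max_repeats: int = 3, max_steps: int = 20) -> None:
        self.max_repeats = max_repeats
        self.max_steps = max_steps
        self.steps = 0
        self.seen = Counter()  # plan fingerprint -> times issued

    @staticmethod
    def _fingerprint(plan: str) -> str:
        # Lower-case and collapse whitespace so retries that differ only
        # in spacing or capitalization count as the same plan.
        normalized = " ".join(plan.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def should_continue(self, plan: str) -> bool:
        """Record `plan`; return False once it repeats too often or the step budget runs out."""
        self.steps += 1
        fingerprint = self._fingerprint(plan)
        self.seen[fingerprint] += 1
        return (self.seen[fingerprint] <= self.max_repeats
                and self.steps <= self.max_steps)


guard = WheelSpinGuard(max_repeats=2)
for plan in ["Search the Q3 report", "search the  q3 report", "Search the Q3 report"]:
    if not guard.should_continue(plan):
        print("Stopping: the agent is spinning; escalate or refresh its context.")
        break
```

Stopping the loop does not fix the underlying context, but it turns silent spending into a visible signal that the data the agent is working from needs attention.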

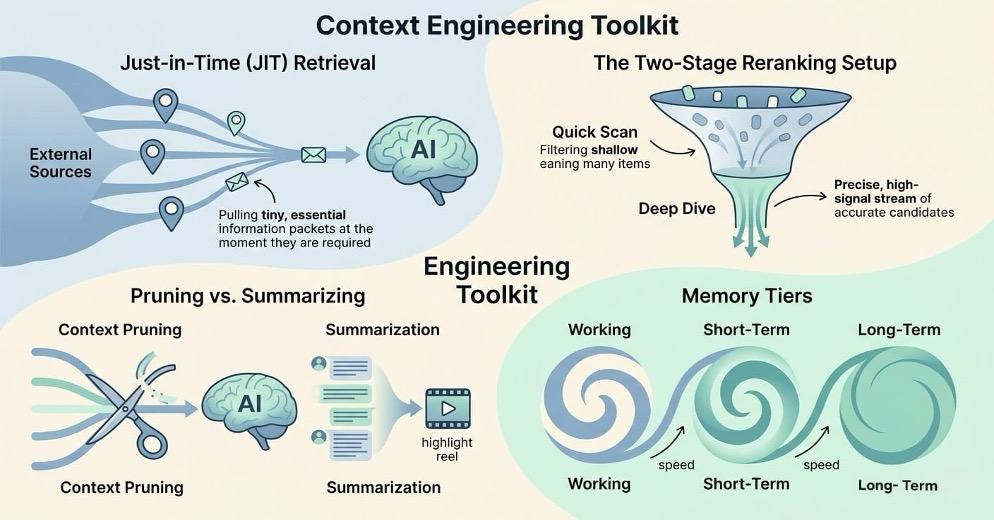

The Context Engineering Toolkit: How We Fix It

To stop the AI from getting overwhelmed, engineers use a few different strategies to make sure only the most important “high-signal” info reaches the model.

- Just-in-Time (JIT) Retrieval

Instead of dumping a huge dataset into the AI all at once, we can use JIT retrieval. The system holds onto small “pointers” to the data (such as file paths or document IDs) and loads only the specific parts it needs at the exact moment it has to reason through a problem.

- The Two-Stage Reranking Setup

Most basic search systems find things that are “similar” but not necessarily “right.” To fix this, we can use a two-step process:

- The Quick Scan: A fast retriever, such as keyword or vector search, grabs the top 50 or 100 likely candidates.

- The Deep Dive: A more powerful but slower model, such as a cross-encoder reranker, re-checks those candidates against the question and picks the best ones. This simple change can reportedly make the AI’s answers about 33-40% more accurate.
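The JIT retrieval and two-stage reranking ideas above fit together: the quick scan can work on lightweight pointers, and full documents are loaded just in time for the deep dive. This is a toy under stated assumptions: `Pointer`, `quick_scan`, `deep_dive` and `toy_scorer` are hypothetical names, word overlap on titles stands in for a real fast retriever (such as BM25 or vector search), and `toy_scorer` stands in for a cross-encoder or LLM-based reranker.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Pointer:
    """Lightweight handle: enough to rank a document, not its full text."""
    doc_id: str
    title: str
    load: Callable[[], str]  # fetches the full text only when called


def quick_scan(query: str, pointers: list[Pointer], k: int) -> list[Pointer]:
    """Stage 1 (fast): rank by word overlap between query and title, keep top k."""
    terms = set(query.lower().split())
    return sorted(pointers,
                  key=lambda p: len(terms & set(p.title.lower().split())),
                  reverse=True)[:k]


def deep_dive(query: str, candidates: list[Pointer],
              scorer: Callable[[str, str], float], n: int) -> list[tuple[str, str]]:
    """Stage 2 (slow): load full text just in time, re-score, keep the best n."""
    loaded = [(p.doc_id, p.load()) for p in candidates]
    loaded.sort(key=lambda item: scorer(query, item[1]), reverse=True)
    return loaded[:n]


def toy_scorer(query: str, text: str) -> float:
    """Stand-in for a cross-encoder: counts query-term occurrences in the text."""
    lowered = text.lower()
    return float(sum(lowered.count(term) for term in query.lower().split()))


# Hypothetical corpus: doc_id -> (title, full text).
corpus = {
    "a": ("Refund policy", "Our refund policy: a refund is issued within 30 days."),
    "b": ("Shipping policy", "Orders ship within 2 business days."),
    "c": ("Office party", "Cake in the break room on Friday."),
}
loads: list[str] = []  # records which documents were actually fetched


def make_loader(doc_id: str) -> Callable[[], str]:
    def load() -> str:
        loads.append(doc_id)
        return corpus[doc_id][1]
    return load


pointers = [Pointer(d, title, make_loader(d)) for d, (title, _) in corpus.items()]
candidates = quick_scan("refund policy", pointers, k=2)
best = deep_dive("refund policy", candidates, toy_scorer, n=1)
print(best)
print("documents loaded:", loads)
```

Only the two shortlisted documents are ever fetched; the third never leaves storage, which is the point of combining a cheap first pass with just-in-time loading.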

- Pruning vs. Summarizing

There are two main ways to stay under that “attention budget”:

- Context Pruning: This is basically a filter. It cuts out any sentences or bits of data that don’t help answer the question before the AI even sees them.

- Summarization: This shrinks down long conversations into a “highlight reel”. It helps the AI remember the “gist” of a long chat, though there’s a small risk of losing tiny details that might matter later.
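Context pruning can be as simple as a sentence filter. This minimal sketch, assuming plain word overlap as the relevance test, keeps only sentences that share a content word with the question; `prune_context`, `content_terms`, and the stopword list are hypothetical.

```python
import re

# Illustrative stopword list; real systems use a fuller list or skip this step.
STOPWORDS = {"a", "an", "the", "is", "are", "of", "to", "in", "and",
             "what", "how", "for", "on"}


def content_terms(text: str) -> set[str]:
    """Lower-cased words and numbers, minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def prune_context(question: str, context: str, min_overlap: int = 1) -> str:
    """Keep only sentences sharing at least `min_overlap` content terms with the question."""
    q_terms = content_terms(question)
    sentences = re.split(r"(?<=[.!?])\s+", context.strip())
    kept = [s for s in sentences if len(q_terms & content_terms(s)) >= min_overlap]
    return " ".join(kept)


context = ("Refunds are processed by the billing team. The refund window is 30 days. "
           "Our office has a rooftop cafe.")
print(prune_context("What is the refund window?", context))
```

Note that the first sentence is dropped because “Refunds” does not literally match “refund”. That brittleness is why production pruners typically score relevance with embeddings or a small trained model; the sketch only illustrates the filtering step itself.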

- Memory Tiers

Many modern AI systems manage memory in tiers, a lot like a computer does. They have Working Memory for what’s happening right now, Short-Term Memory for the current session and Long-Term Memory for permanent records. This keeps the AI from getting “flooded” while still letting it remember important facts over time.
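A minimal sketch of the three tiers for a chat agent, assuming turns are plain strings: `TieredMemory` and its methods are hypothetical, the tier sizes are arbitrary, and long-term memory is an in-memory dict standing in for a persistent store.

```python
from collections import deque


class TieredMemory:
    """Hypothetical three-tier memory for a chat agent.

    working:    the last few turns, always placed in the prompt
    short_term: older turns from the current session, searched on demand
    long_term:  facts explicitly promoted to persist across sessions
    """

    def __init__(self, working_size: int = 4, session_size: int = 50) -> None:
        self.working = deque(maxlen=working_size)
        self.short_term = deque(maxlen=session_size)
        self.long_term = {}

    def add_turn(self, turn: str) -> None:
        # When working memory is full, move the oldest turn down a tier
        # before the deque's maxlen would silently discard it.
        if len(self.working) == self.working.maxlen:
            self.short_term.append(self.working[0])
        self.working.append(turn)

    def recall(self, term: str) -> list[str]:
        """Look up older session turns on demand instead of always including them."""
        return [t for t in self.short_term if term.lower() in t.lower()]

    def remember(self, key: str, fact: str) -> None:
        """Promote a fact to long-term memory."""
        self.long_term[key] = fact

    def end_session(self) -> None:
        """Drop the session tiers; long-term facts survive."""
        self.working.clear()
        self.short_term.clear()

    def build_context(self, fact_keys: list[str]) -> list[str]:
        """Prompt context: requested long-term facts, then the working turns."""
        facts = [self.long_term[k] for k in fact_keys if k in self.long_term]
        return facts + list(self.working)


memory = TieredMemory(working_size=2)
for turn in ["user: hi", "bot: hello", "user: my order is A-1001"]:
    memory.add_turn(turn)
memory.remember("name", "User's name is Asha.")
print(memory.build_context(["name"]))
```

The prompt only ever carries the working turns plus the facts explicitly requested, so its size stays bounded no matter how long the conversation runs.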

Setting the Standard: The Model Context Protocol (MCP)

By 2026, the Model Context Protocol (MCP), introduced by Anthropic in late 2024, had established itself as the industry’s de facto standard. Now overseen by the Agentic AI Foundation under the Linux Foundation, it functions as a sort of “universal language” for AI. The protocol lets different AI agents connect to tools, APIs and databases through one common interface, instead of requiring a custom integration for every individual connection. It has become so widely adopted that it is now one of the main ways context is delivered to AI systems.
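MCP messages are JSON-RPC 2.0 objects. This sketch builds a `tools/call` request and extracts the text from a result, assuming the message shapes described in the MCP specification (`name` and `arguments` in the request; a `content` list and an `isError` flag in the result). The tool name `lookup_order` and the sample response are hypothetical, and a real client would use an official MCP SDK, perform the `initialize` handshake, and send messages over a transport such as stdio rather than just printing them.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC request ids must be unique within a session


def jsonrpc_request(method: str, params: dict) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP builds on."""
    return json.dumps({"jsonrpc": "2.0", "id": next(_request_ids),
                       "method": method, "params": params})


def call_tool_request(tool_name: str, arguments: dict) -> str:
    """Build a `tools/call` request for a tool the server listed via `tools/list`."""
    return jsonrpc_request("tools/call", {"name": tool_name, "arguments": arguments})


def text_from_tool_result(raw_response: str) -> str:
    """Join the text items of a `tools/call` result, raising on errors."""
    message = json.loads(raw_response)
    if "error" in message:  # JSON-RPC error object: the request itself failed
        raise RuntimeError(message["error"].get("message", "unknown error"))
    result = message["result"]
    text = "\n".join(item["text"] for item in result.get("content", [])
                     if item.get("type") == "text")
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError(text or "tool reported an error")
    return text


print(call_tool_request("lookup_order", {"order_id": "A-1001"}))
sample_response = json.dumps({
    "jsonrpc": "2.0", "id": 1,
    "result": {"content": [{"type": "text", "text": "Order A-1001 shipped on Monday."}],
               "isError": False},
})
print(text_from_tool_result(sample_response))
```

Because every tool speaks this same envelope, an agent that can build and parse these two shapes can, in principle, use any MCP server without tool-specific glue code.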

Keeping Things Safe: The Context Control Plane

As AI agents become more independent, companies need a way to manage them. This is done through a Context Control Plane. Think of it as a control room that decides which data and permissions each AI agent is allowed to have.
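At its simplest, the control room can be a default-deny policy check that every context request passes through, with an audit trail. `Policy`, `ContextControlPlane`, and the source and action names below are hypothetical; a real deployment would typically sit on top of an existing identity and access-management system.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Policy:
    agent_id: str
    allowed_sources: frozenset[str]  # data the agent may see
    allowed_actions: frozenset[str]  # e.g. "read", "write"


@dataclass
class ContextControlPlane:
    policies: dict[str, Policy] = field(default_factory=dict)
    audit_log: list[str] = field(default_factory=list)

    def register(self, policy: Policy) -> None:
        self.policies[policy.agent_id] = policy

    def authorize(self, agent_id: str, source: str, action: str) -> bool:
        """Default deny: unknown agents and unlisted sources or actions are refused."""
        policy = self.policies.get(agent_id)
        allowed = (policy is not None
                   and source in policy.allowed_sources
                   and action in policy.allowed_actions)
        # Every decision is logged, so access can be reviewed later.
        self.audit_log.append(f"{agent_id} {action} {source}: {'ALLOW' if allowed else 'DENY'}")
        return allowed


plane = ContextControlPlane()
plane.register(Policy("support-bot", frozenset({"orders_db"}), frozenset({"read"})))
print(plane.authorize("support-bot", "orders_db", "read"))
print(plane.authorize("support-bot", "hr_records", "read"))
```

Defaulting to deny means a newly added agent or data source gets no access until someone writes a policy for it, which is usually the safer failure mode.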

The Three R’s of Management

To be reliable, a system needs to follow three rules:

- Relevance: The info must be up-to-date and actually related to the task.

- Reliability: We need to know exactly where the data came from.

- Retention: AI needs to be able to build up “institutional knowledge” over time.
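The three rules can be turned into admission checks that run before anything enters the model’s context. In this sketch, `ContextItem`, `admit` and `KnowledgeStore` are hypothetical names; the 30-day freshness window and tag matching are illustrative choices, the sample items are invented, and retention is reduced to an in-memory list standing in for a real database.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class ContextItem:
    text: str
    source: Optional[str]   # provenance, needed for Reliability
    updated_at: datetime    # last confirmed date, needed for Relevance
    tags: set[str] = field(default_factory=set)


def admit(item: ContextItem, task_tags: set[str], max_age: timedelta, now: datetime) -> bool:
    """Relevance (fresh and on-topic) plus Reliability (traceable source)."""
    fresh = now - item.updated_at <= max_age
    on_topic = bool(item.tags & task_tags)
    traceable = bool(item.source)
    return fresh and on_topic and traceable


@dataclass
class KnowledgeStore:
    """Retention: admitted items accumulate across tasks instead of being discarded."""
    items: list[ContextItem] = field(default_factory=list)

    def ingest(self, candidates: list[ContextItem], task_tags: set[str],
               max_age: timedelta, now: datetime) -> int:
        admitted = [c for c in candidates if admit(c, task_tags, max_age, now)]
        self.items.extend(admitted)
        return len(admitted)


now = datetime(2026, 1, 15, tzinfo=timezone.utc)
candidates = [
    ContextItem("Premium plan costs $40/month.", "billing_wiki",
                datetime(2026, 1, 2, tzinfo=timezone.utc), {"billing"}),
    ContextItem("Premium plan costs $30/month.", "billing_wiki",   # stale
                datetime(2024, 6, 1, tzinfo=timezone.utc), {"billing"}),
    ContextItem("Premium is cheaper than ever!", None,              # no source
                datetime(2026, 1, 10, tzinfo=timezone.utc), {"billing"}),
]
store = KnowledgeStore()
print(store.ingest(candidates, {"billing"}, timedelta(days=30), now))
```

Only the fresh, sourced, on-topic price survives; the outdated price (temporal rot) and the unsourced claim never reach the model.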

Using the NIST Framework

We also use the NIST AI Risk Management Framework to keep things on track. This involves:

- Governing: Creating a culture where people are accountable for how the AI behaves.

- Mapping: Figuring out where the AI might have biases or blind spots.

- Measuring: Tracking how often the AI gets things right or makes things up.

- Managing: Fixing problems like “data drift” before they cause issues.
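The NIST framework describes what to do, not how to code it, so the following is only a hypothetical illustration of the Measuring and Managing steps: compute accuracy and a hallucination rate from human-reviewed answers, then flag a regression against a baseline. `EvalRecord`, `measure`, `regressed`, the sample records, and the 0.05 tolerance are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class EvalRecord:
    correct: bool      # a reviewer judged the answer correct
    unsupported: bool  # the answer made a claim the provided context did not back up


def measure(records: list[EvalRecord]) -> dict[str, float]:
    """Accuracy and hallucination rate over a batch of reviewed answers."""
    if not records:
        raise ValueError("need at least one evaluation record")
    n = len(records)
    return {
        "accuracy": sum(r.correct for r in records) / n,
        "hallucination_rate": sum(r.unsupported for r in records) / n,
    }


def regressed(current: dict[str, float], baseline: dict[str, float],
              tolerance: float = 0.05) -> bool:
    """True if accuracy fell, or hallucinations rose, by more than `tolerance`."""
    return (baseline["accuracy"] - current["accuracy"] > tolerance
            or current["hallucination_rate"] - baseline["hallucination_rate"] > tolerance)


this_week = measure([EvalRecord(True, False), EvalRecord(True, False),
                     EvalRecord(False, True), EvalRecord(True, False)])
baseline = {"accuracy": 0.90, "hallucination_rate": 0.05}
if regressed(this_week, baseline):
    print("Regression detected: check for data drift or stale context.", this_week)
```

Running a check like this on a fixed evaluation set after every data or prompt change is one way to catch drift before users do.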

Conclusion: Context Is the New Currency

Looking ahead to the end of 2026, the real difference between a successful AI project and a failure won’t be the size of the model. It will be the quality of the context. The companies that win will be the ones that treat context as a top priority, building “Context Lakes” and using tools like MCP to keep their AI smart. We’re moving from a world where we just “talk” to AI to a world where AI truly understands the complex setting it’s working in. It’s the final step in making AI a reliable partner instead of just a fancy chatbot.

Please also read my co-authored paper on “ML Assisted Diagnosis for Parkinson’s Disease” published by IEEE at:

https://doi.org/10.1109/ACOIT66109.2025.11436864